Evaluation Results

1. Critical performance

The most important result is that the system worked as a full chain: users could place physical UI cards, scan them with a phone, see the detected markers, translate the design into a webpage, and then further edit the content digitally. That means the core promise of the playground was achieved.

At the same time, the evaluation also made clear that this critical performance depended on several technical conditions. The detection process worked much better after we improved the rotation tracking and added support for better camera handling. The flashlight toggle and image upload option were especially important in practice, because lighting and camera setup strongly affected recognition quality (mainly because the laminated cards reflect light easily). So the system became much more robust than the original proof of concept, but it was not yet effortless or invisible. The technical layer still mattered quite a lot.

This also became visible in the demo sessions. Both teachers (and several students) were able to use the system and create webpage designs themselves, which supports the claim that the full workflow was functional. However, Ms Mader needed additional explanation about how the code worked and how the system translated the physical layout into a digital one. That shows that the system was usable, but not fully self-explanatory at a technical level (although it is debatable whether that level of technical understanding is needed in practice).

Strength: The full scan-to-page chain worked in practice.

Limitation: Performance still depended on relatively controlled conditions and sometimes on explanations from the designers.

2. Use and onboarding

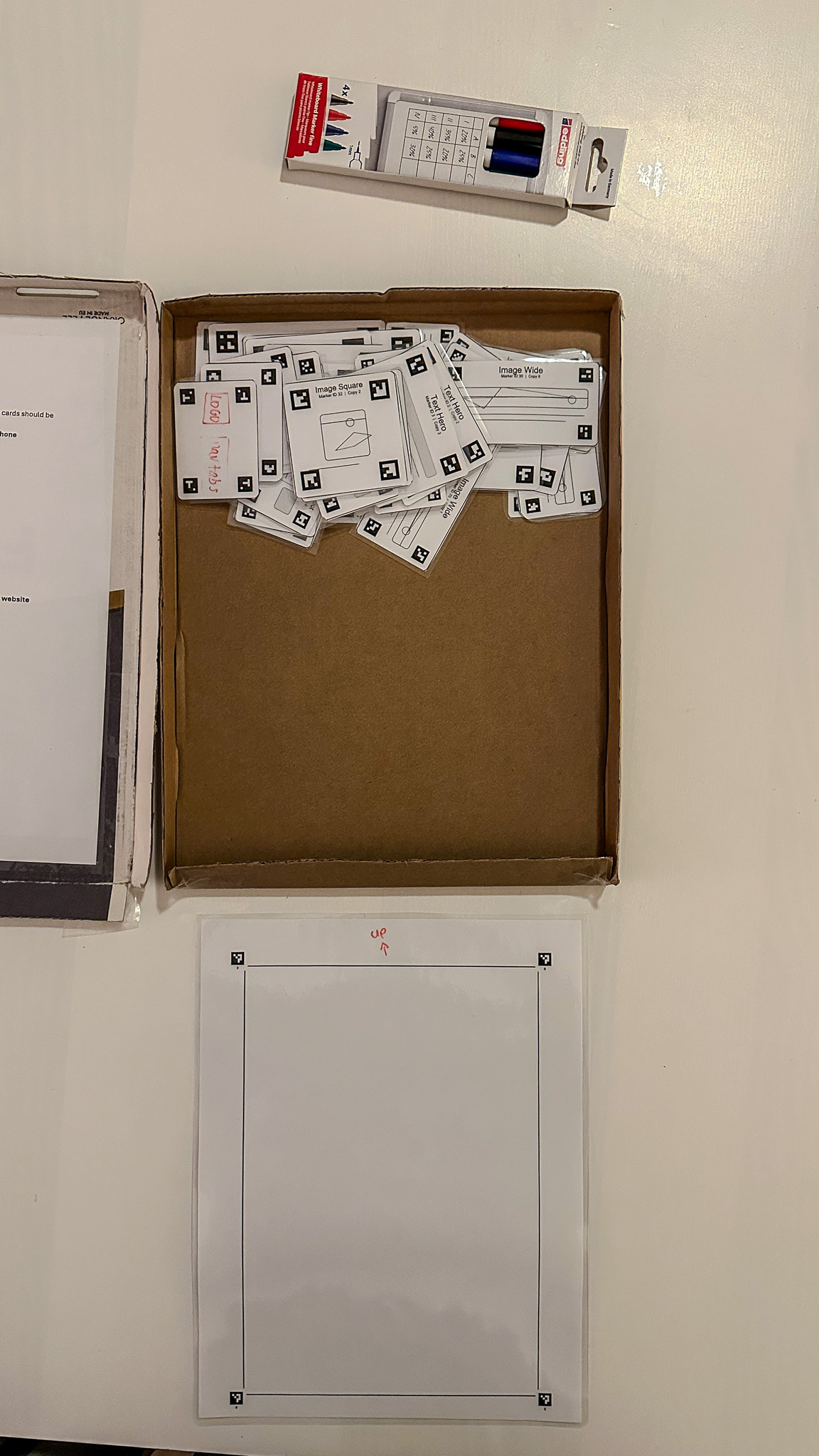

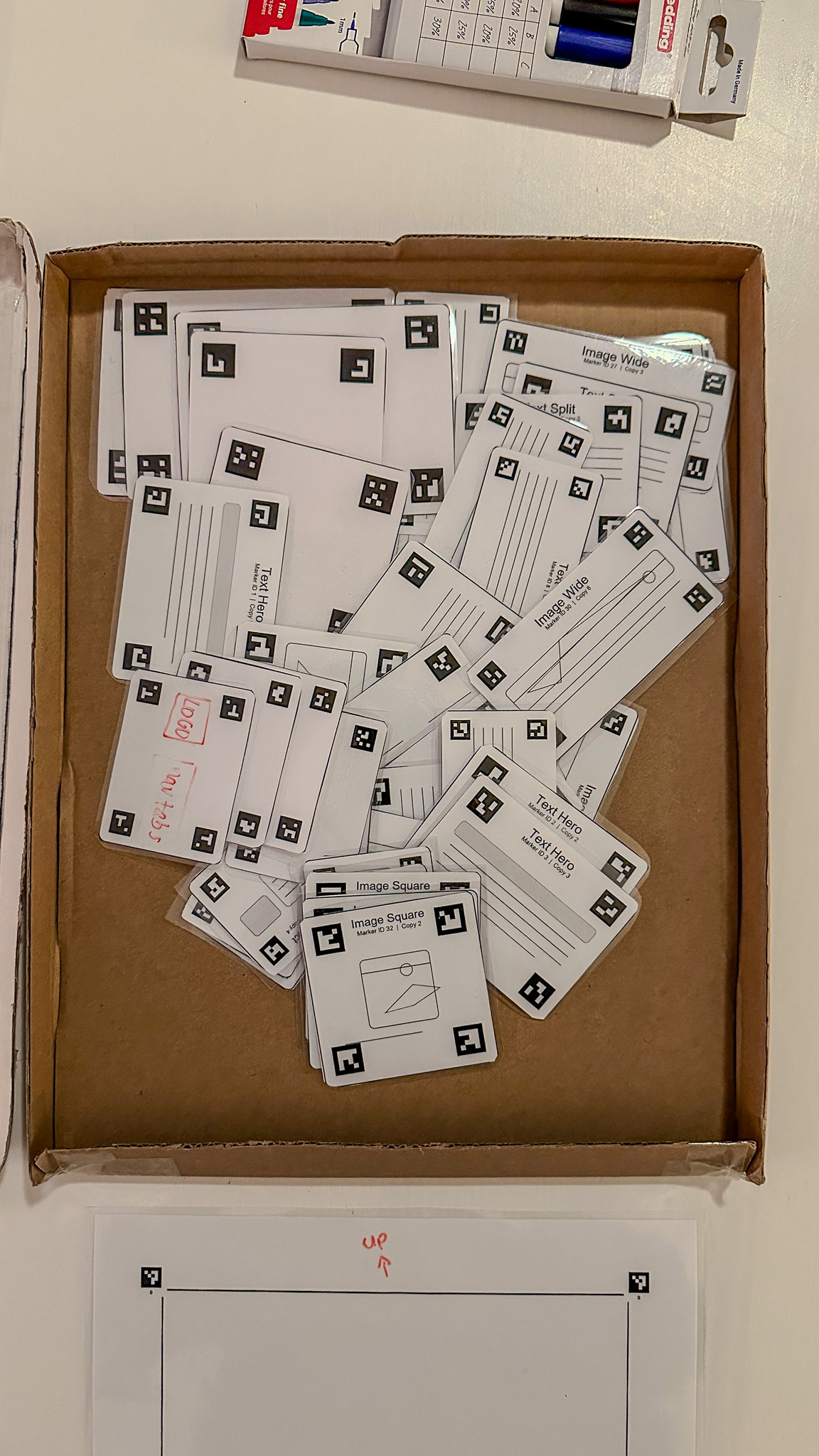

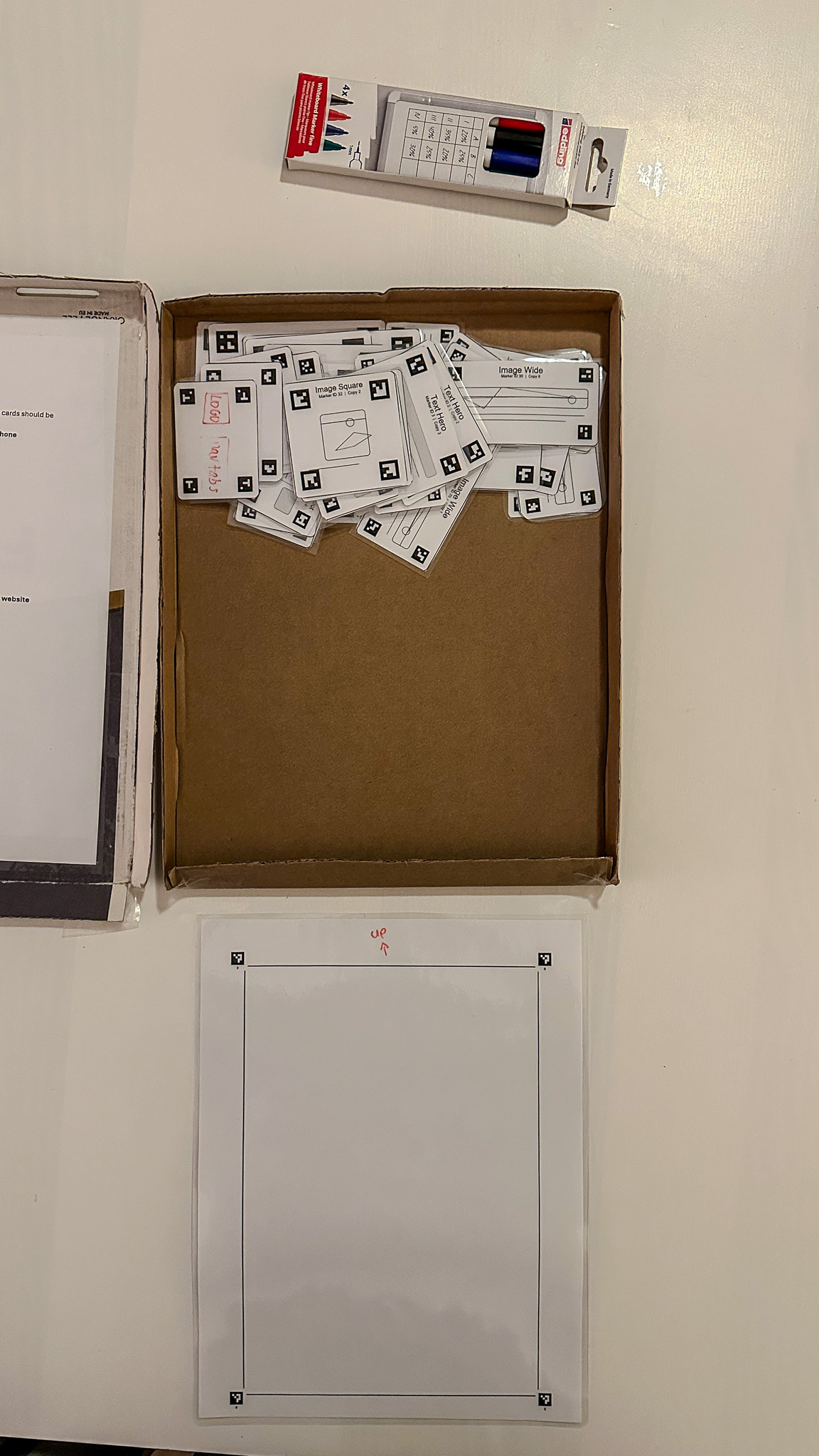

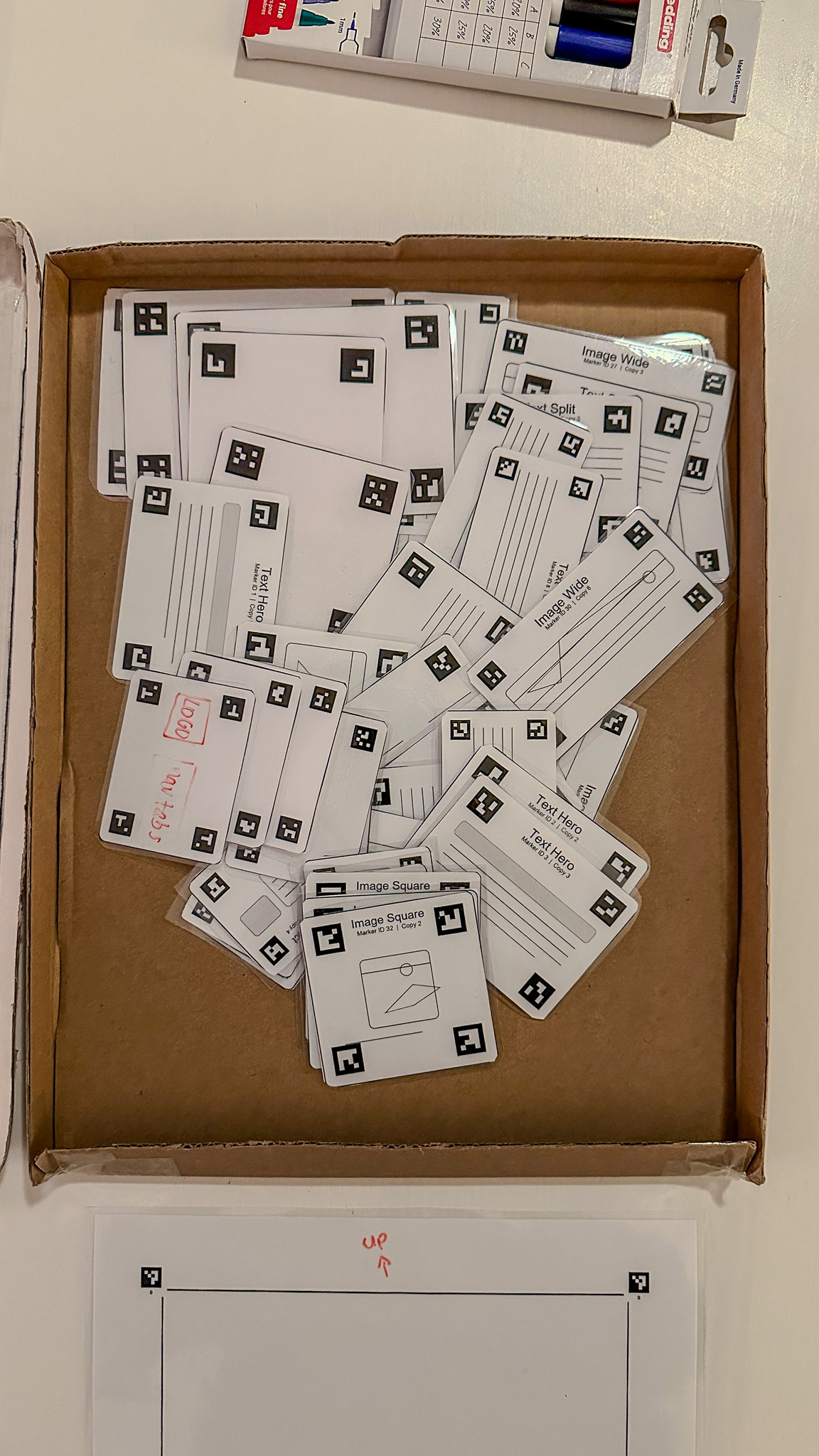

In terms of use, I think the playground performed well. The A4 sheet clearly framed the workspace, the physical cards made the design elements tangible, and the act of arranging pieces on paper was easy to grasp. This created a low threshold for getting started. Users did not have to begin in abstract software or from an empty screen. Instead, they could move pieces around, compare options, and physically construct a design.

The laminated blank cards were also a great improvement, because they prevented the playground from becoming too closed or predetermined. Users were not restricted to only the prepared set of cards, but could still add their own ideas.

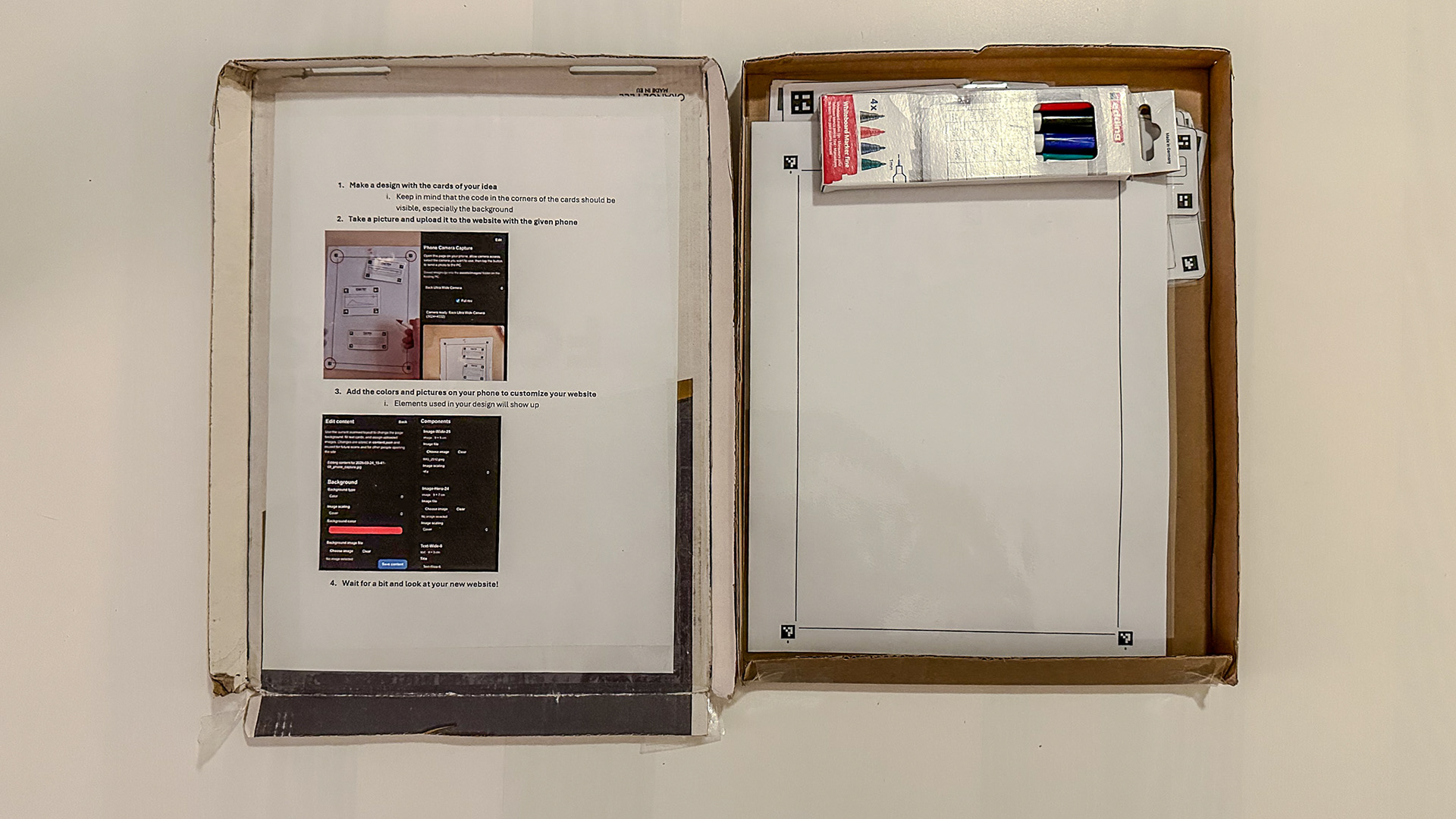

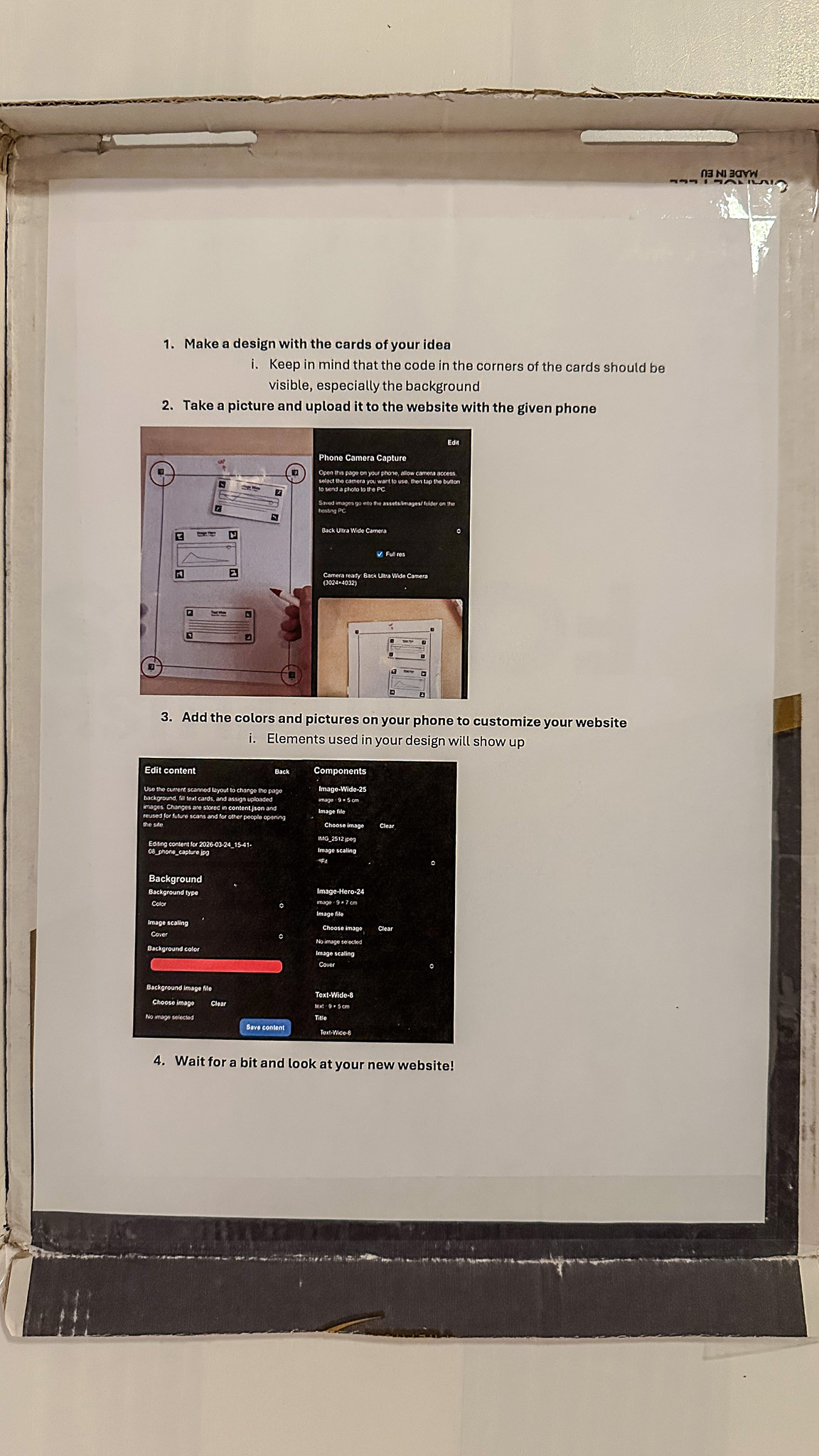

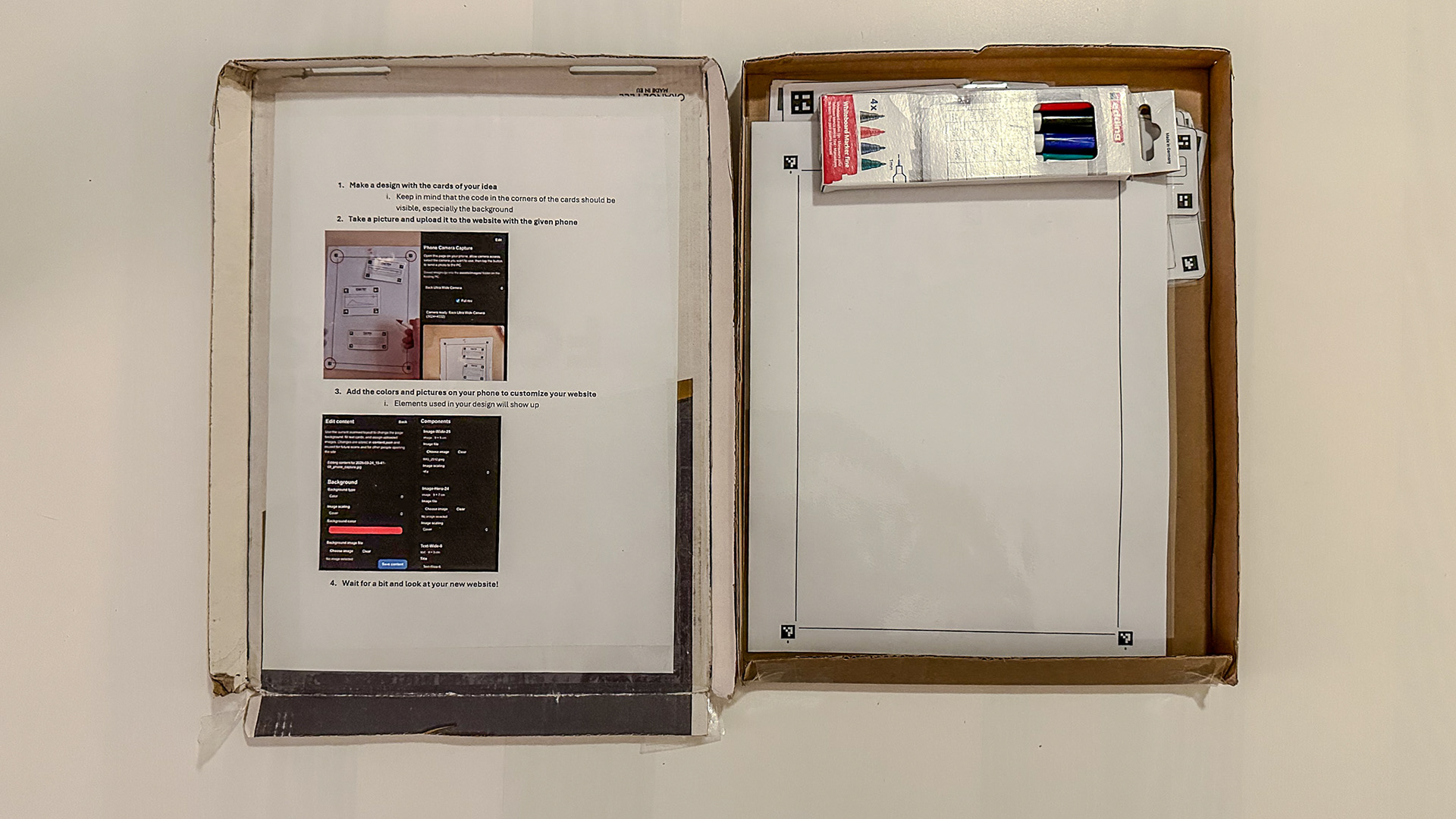

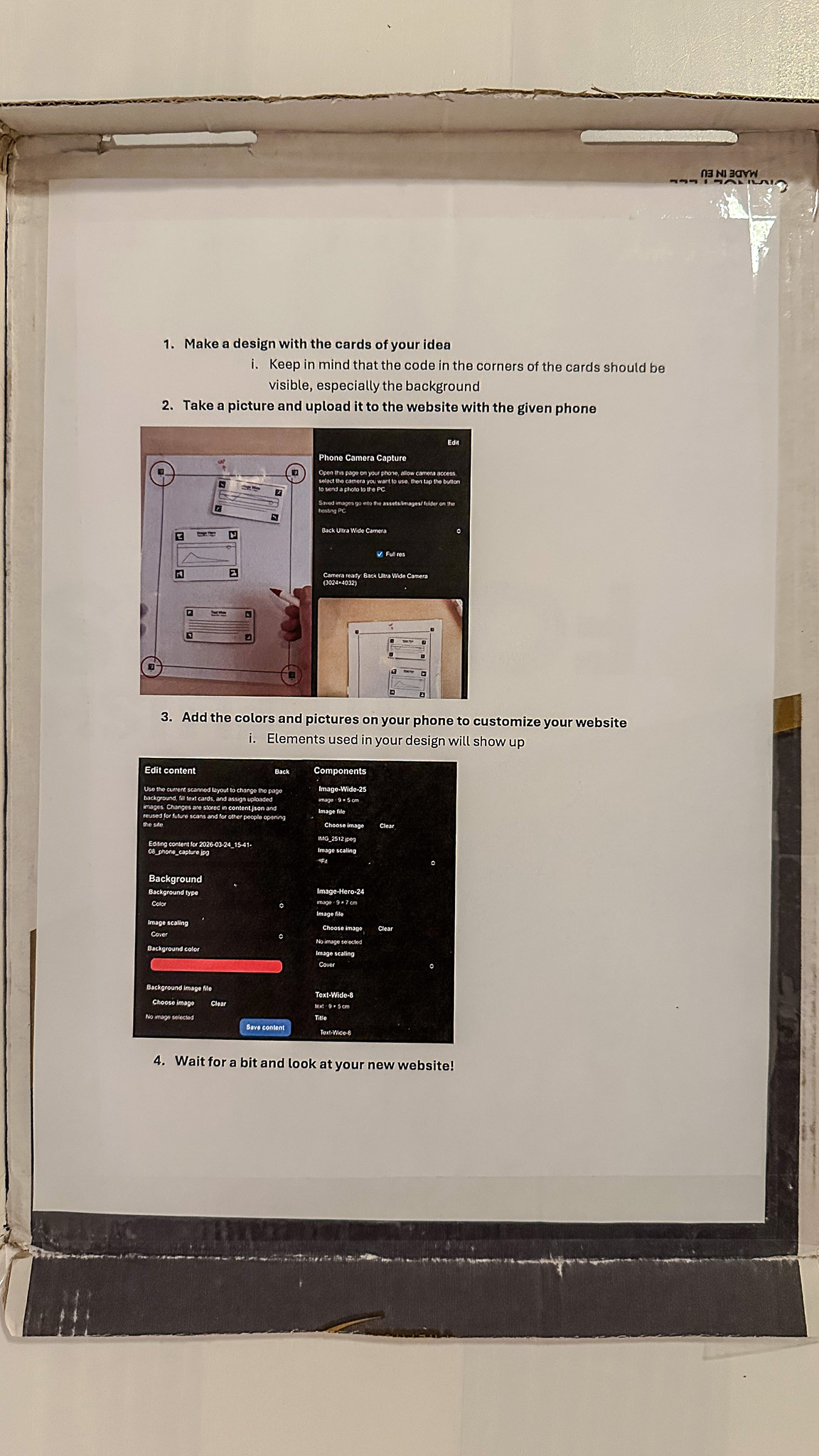

At the same time, the onboarding was only partially embedded in the material itself. In Session 7 and Session 8, the process worked best when someone first explained the sequence: place cards, scan them, inspect the result, then refine digitally. So while the physical kit was understandable, the relation between physical composition, marker recognition, and digital editing did not yet fully explain itself through the materials alone. That is also why we made an instruction document on the inside of the box.

Strength: The actual entry point was clear and approachable.

Limitation: The full physical-digital workflow still requires facilitation and scaffolding.

3. High ceiling

I think our playground clearly showed a high ceiling. Users were not limited to one fixed webpage structure or one fixed result. The physical kit could be used to create many different page layouts, and after scanning, the mobile editing system allowed users to continue refining text, colour, image content, and formatting (including simultaneously on multiple phones).

The high ceiling also became visible in how people started thinking beyond simple webpage design. During the demo, Mr Dertien explicitly appreciated that the system could also be used in other ways, for example for furniture blueprints or more general interface layouts. That suggests that the playground is not limited to one narrow exercise, but can support more advanced or more exploratory outcomes.

A critical note here is that the high ceiling was stronger in layout and iterative refinement than in completely free formal expression. The system still depends on a recognisable card language for the user and on marker-based translation for the system. So while it supports complexity and further development, it does not offer unlimited freedom. It is a structured high ceiling, not a fully open one.

Strength: The playground supported increasingly complex layouts, refinements, and adjacent use cases.

Limitation: The ceiling is high within the system’s grammar, but that grammar still constrains the kinds of outcomes that are possible.

4. Wide walls

The playground also performed well on wide walls. Even with the same toolkit, different users could produce very different results. The prepared UI cards support many possible page structures, and the blank cards add room for custom elements. The system can be used individually or collaboratively, and it can support different design goals such as making a website homepage, a workflow interface, or even another structured visual plan.

What makes this especially strong is that the physical nature of the system changes the design conversation. People can move things around, compare alternatives next to each other, and discuss designs more spatially. That gives the kit wider walls than a fully predefined worksheet would have.

A concrete sign of this was that users did not only follow one expected path. During the demo, the system immediately invited discussion about other applications besides webpage design, and the blank cards also made it possible to move beyond the pre-made UI vocabulary. That indicates that the playground does not only reproduce one fixed exercise.

Still, there are limits. The playground remains oriented toward card-based design and a recognisable rectangular UI structure (currently an A4 sheet). It is not equally suited for every possible digital product or interaction style. In that sense, the walls are wide, but not endless.

Strength: The same kit supported multiple layouts, interpretations, and adjacent applications.

Limitation: The playground still favours structured, modular interface design over other forms of prototyping.

5. Collaboration and reflection

One of the strongest results of the evaluation was that the playground supported collaborative interaction well. In the demo, multiple people could work together around the A4 sheet, discuss which cards to place, and then inspect the digital result together on the larger screen. This was one of the key values of making the design problem physical in the first place.

The PC view was also important here. It allowed the resulting webpage to be shown to the group, rather than leaving the experience only on one phone screen. That made comparison, discussion, and iteration easier.

In that sense, the playground not only supported making a result, but also supported the reflective side of tinkering: trying something, seeing what happens, discussing it, and changing it again.

A more critical point is that the scanning step still concentrates some control in the hands of the person using the phone. So while the physical phase was strongly collaborative, the transition to digital sometimes became more single-user than the tabletop part itself, even though the editing could be done on multiple phones at the same time.

Strength: The setup supported shared discussion, comparison, and reflection.

Limitation: The phone-based scanning/editing step could still centralise control.